The Creative Technologist Roundup x 17.0

🌟 This week’s Creative Tech Roundup is less about new frontiers and more about the great digital clean-up, as major platforms try to make AI play by the rules.

Spotify is finally taking on “AI slop” with a new spam filter and a standardized system for disclosing AI use in music credits, following the removal of over 75 million fraudulent tracks. 🎧 Meanwhile, the code is getting smarter, with OpenAI’s Responses API enabling next-gen agents to remember and follow through on complex, multi-step tasks like a dedicated detective. 💻 On the design front, Figma is bridging the gap between design and code, upgrading its MCP server to allow AI coding agents to directly interact with design files for faster development. ✍️ Not all AI journeys are smooth, however, as Lionsgate’s attempt to create films with Runway AI hit a snag because its massive catalog simply isn’t big enough to train a quality model. 🎬 But the experimentation continues, as Google Labs launched Mixboard, an AI-powered visual concepting board for quickly generating and refining ideas. 💡 The takeaway? The AI gold rush is shifting from launching new models to building the infrastructure and guardrails necessary to make them reliable. ✨

x Macklin Andrick x

Bytes

#TechNews

#UXUI

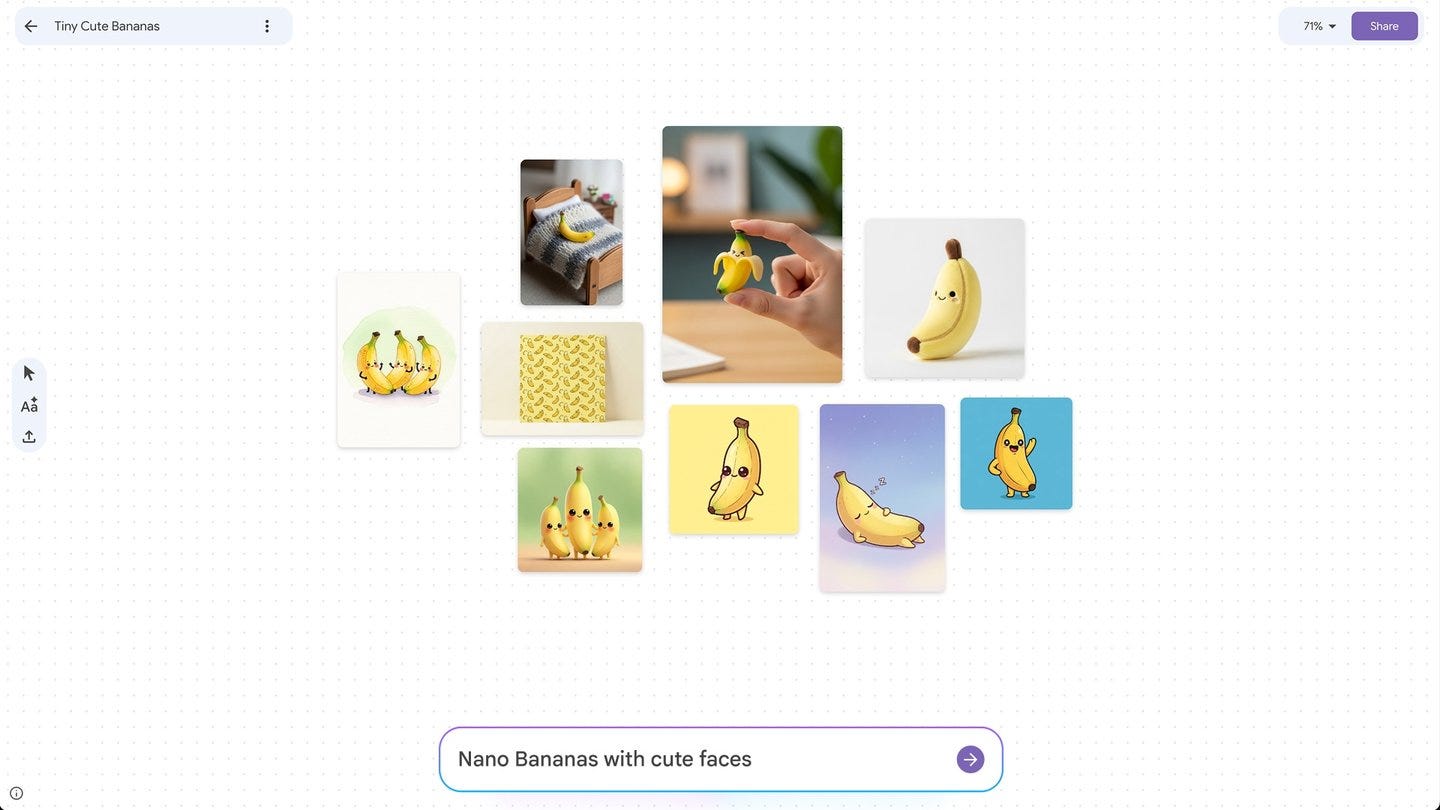

Mixboard is a new way to visualize your ideas from Google Labs.

Google Labs has launched Mixboard, an experimental, AI-powered concepting board designed to help users quickly visualize, explore, and refine their ideas. On this open canvas, users can start a project from a text prompt, from a pre-populated board, or by importing their own images. Mixboard is built on generative AI, including the Nano Banana image editing model: users generate unique visuals and then edit them with natural language commands, such as combining images or making small changes. The platform also includes one-click options like “regenerate” and “more like this” to create new versions of an idea, and it can generate text based on the context of the images on the board. Mixboard is currently available as a public beta in the U.S. and is intended to make AI a more accessible tool for creative exploration across a variety of concepts, from home decor to product design.

#Music

Spotify is finally taking steps to address its AI slop and clone problem

Spotify recently announced a set of updated policies aimed at combating the misuse of AI-generated music and protecting artists on its platform. The company is tightening its impersonation policy to give artists whose voices have been cloned without authorization clearer recourse. This fall, Spotify will introduce a new music spam filter designed to identify and stop recommending “AI slop,” mass uploads, and other fraudulent content. The company says it has already removed over 75 million spammy tracks in the last year. Spotify is also supporting a new industry standard for transparency by displaying specific AI disclosures in music credits. These disclosures, developed with DDEX, will indicate whether AI was used for vocals, instrumentation, or post-production. Together, the measures are intended to maintain trust across the platform and protect artist identity while supporting the responsible use of AI for creative purposes.

#Agents

Why OpenAI built the Responses API

OpenAI has introduced the Responses API (/v1/responses), built for the “agentic future,” as the recommended way to integrate its most advanced models, including GPT-5. The API lets the model act like a dedicated detective who remembers every step and detail of a conversation, which makes complex, multi-step interactions more reliable. Designed as a structured reasoning and action loop, it preserves the model’s reasoning state across multiple turns, improving efficiency and performance, while native support for multimodal workflows (text, image, audio) makes interactions feel more natural. The aim is for future AI-powered features to be more consistent, less error-prone, and significantly more capable of completing long-running projects on their own.
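For the curious, here is a rough sketch of what “remembering across turns” can look like at the request level. It only builds the JSON bodies and does not call the API. It assumes the `previous_response_id` field is how a follow-up request points back at an earlier turn; the helper name `build_turn` and the id `resp_example_123` are made up for illustration, so check the official API reference before relying on any of it.

```python
import json
from typing import Optional

# Endpoint path as named in the article; shown for context only, never called here.
RESPONSES_ENDPOINT = "https://api.openai.com/v1/responses"


def build_turn(
    user_text: str,
    previous_response_id: Optional[str] = None,
    model: str = "gpt-5",
) -> dict:
    """Build the JSON body for one turn (hypothetical helper, not part of any SDK)."""
    payload = {"model": model, "input": user_text}
    # Assumption: linking to the prior response lets the service carry
    # conversation and reasoning state forward instead of resending it all.
    if previous_response_id is not None:
        payload["previous_response_id"] = previous_response_id
    return payload


# First turn: nothing to link back to yet.
first = build_turn("List the open bugs in the tracker export.")

# Placeholder id standing in for whatever the service would return for turn one.
example_first_id = "resp_example_123"

# Second turn: refers to the first by id rather than repeating its content.
second = build_turn(
    "Now draft a fix plan for the top one.",
    previous_response_id=example_first_id,
)

print(json.dumps(second, indent=2))
```

The point of the sketch is the shape of the second request: it carries a pointer to the earlier turn rather than a pasted transcript, which is the “detective who remembers” idea in miniature.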

#Design

Figma made its design tools more accessible to AI agents

Figma has significantly upgraded its Model Context Protocol (MCP) server to deeply integrate its design files with AI coding agents and tools like Figma Make. The update moves the MCP server beyond the desktop application, allowing remote access from AI coding tools and Integrated Development Environments (IDEs), including Anthropic’s tools, Cursor, and VS Code, as well as browser-based AI models. This change enables AI agents to generate code and interact with prototypes using the structured, semantic data from Figma files rather than just screenshots, which is designed to speed up the workflow for developers. Figma’s goal is to create a seamless bridge between design and code, allowing ideas in Figma Make (the platform’s prompt-to-app tool) to evolve into production-ready features without manual rewrites.

#Entertainment

Movie Studio Lionsgate is Struggling to Make AI-Generated Films With Runway

Hollywood studio Lionsgate’s partnership with AI video company Runway has been unproductive over the past year due to limitations in the AI’s training data. The core issue is that the studio’s extensive catalog, which includes franchises like John Wick and The Hunger Games, is too small for the AI to produce quality, full-length generated films. Experts note that AI models require massive amounts of media to create professional content, and even huge collections like the Disney catalog would be insufficient. Furthermore, the project is complicated by the legal “gray area” surrounding actor rights and compensation when their likeness is used in AI-generated clips. This struggle highlights the current technological limits of AI video and the broader legal challenges facing the entertainment industry.

#Code

How Anthropic teams use Claude Code

Anthropic has published an internal guide detailing how its teams use the Claude Code command-line tool for agentic coding across various departments. The document outlines how engineers, data scientists, and even non-technical staff use the tool to tackle complex projects and automate workflows. Key use cases include helping new hires navigate massive codebases quickly, debugging complex infrastructure, and accelerating documentation synthesis. The guide emphasizes that the tool has enabled teams to achieve significant time savings (reported as 2-4x in some cases), build applications in unfamiliar languages, and shift from building temporary tools to persistent ones.